Memcache is often used on Drupal sites as a high-performance in-memory cache. This allows Drupal to offload some cached items from the database to physical memory, reducing access time and improving performance.

Generally, Memcache (or memcached, as it’s sometimes called) is pretty easy to install. Most Linux distributions include it in their default package manager. Once it’s installed, you often only need to tell it how much memory to use and which port to listen on, then start it up. Configuring Drupal to use Memcache via the Memcache module is also rather simple:

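```php
// Map each Memcache server (host:port) to a cluster name.
$settings['memcache']['servers'] = [
  'memcache.mydomain:11211' => 'default',
];
// Map cache bins to clusters; bins not listed here use the 'default' cluster.
$settings['memcache']['bins'] = ['default' => 'default'];
// String prepended to every cache key (empty means no prefix).
$settings['memcache']['key_prefix'] = '';
// Use Memcache as the default cache backend.
$settings['cache']['default'] = 'cache.backend.memcache';
```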

Add the above to your settings.php, rebuild the cache, and you’re using Memcache.

Or Are You!?

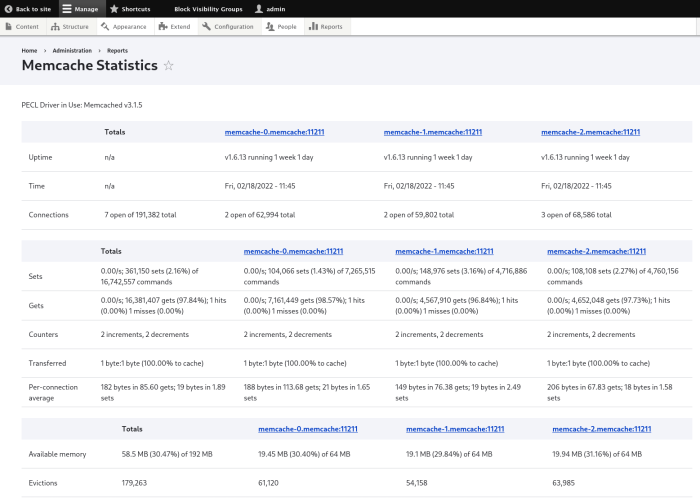

The thing is, when it’s working correctly, you often see little difference in how the site operates, other than the subjective feeling that “it’s faster.” How can we be sure that Drupal is really sending data to Memcache? The Memcache module includes a submodule for exactly this purpose: Memcache Admin. It provides a report for inspecting the status of your Memcache server(s).

Navigate to Admin > Reports > Memcache Statistics and you’ll be greeted with details about how much memory is being used, how many items are cached, and how many of those items were removed (“evicted”).

For most Drupal users, the Memcache Statistics page is good enough to determine if Memcache is working as expected.

Scaling Out

As a site becomes more complex or has more demands placed upon it, you may need to scale your Memcache deployment. You can obviously add more memory (“scaling up”), but what would really help is adding more Memcache servers (“scaling out”). The Memcache module makes this easy, since you can list multiple servers in your settings.php:

```php
// All three servers belong to the 'default' cluster, so cache items are spread across them.
$settings['memcache']['servers'] = [
  'memcache-0.mydomain:11211' => 'default',
  'memcache-1.mydomain:11211' => 'default',
  'memcache-2.mydomain:11211' => 'default',
];
$settings['memcache']['bins'] = ['default' => 'default'];
$settings['memcache']['key_prefix'] = '';
$settings['cache']['default'] = 'cache.backend.memcache';
```

There’s no need for a load balancer in this setup, as the Memcache module will take care of distributing the cache items consistently throughout the cluster.
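The sketch below illustrates what “distributing consistently” means. It is a minimal consistent-hashing ring in Python. It is not necessarily the algorithm the Memcache module or the underlying PHP extension uses. The MD5-based positions, the 100 virtual points per server, and the `HashRing` class are assumptions for illustration. Two properties matter here. Every client with the same server list picks the same server for a given key. And adding a server moves only the keys that now belong to the new server.

```python
import bisect
import hashlib


def ring_position(value):
    """Map a string to a 32-bit ring position using the first 4 bytes of its MD5."""
    return int.from_bytes(hashlib.md5(value.encode("utf-8")).digest()[:4], "big")


class HashRing:
    """Minimal consistent-hash ring (hypothetical); each server gets many virtual points."""

    def __init__(self, servers, points_per_server=100):
        self.points_per_server = points_per_server
        self._ring = []  # sorted (position, server) pairs
        for server in servers:
            self.add(server)

    def add(self, server):
        for i in range(self.points_per_server):
            bisect.insort(self._ring, (ring_position(f"{server}#{i}"), server))

    def server_for(self, key):
        # First point at or after the key's position, wrapping around to the start.
        idx = bisect.bisect_left(self._ring, (ring_position(key), ""))
        return self._ring[idx % len(self._ring)][1]


servers = [f"memcache-{n}.mydomain:11211" for n in range(3)]
ring = HashRing(servers)
keys = [f"example-key-{n}" for n in range(1000)]
before = {key: ring.server_for(key) for key in keys}

# Scale out: add a fourth server and see which keys move.
ring.add("memcache-3.mydomain:11211")
moved = [key for key in keys if ring.server_for(key) != before[key]]
print(f"{len(moved)} of {len(keys)} keys moved")
# Every moved key lands on the new server; nothing shuffles between the old ones.
print({ring.server_for(key) for key in moved})
```

A plain `hash(key) % number_of_servers` scheme would also be deterministic. However, it would remap most keys whenever a server is added, which empties much of the cache at once.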

Scaling Out Even More

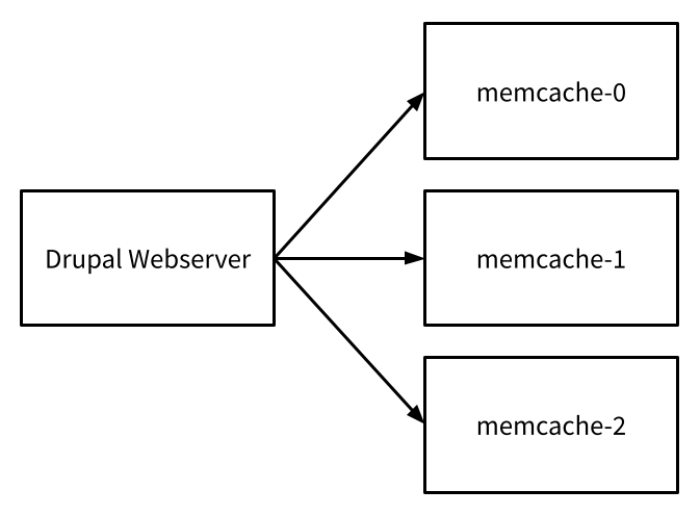

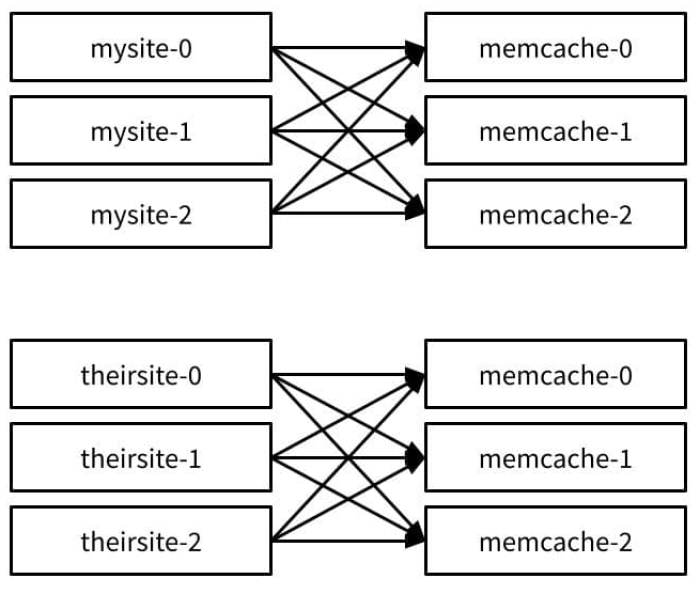

Things get more complicated when you also decide to scale out your Drupal web server. With one web server, the Memcache network connections are finite and controlled:

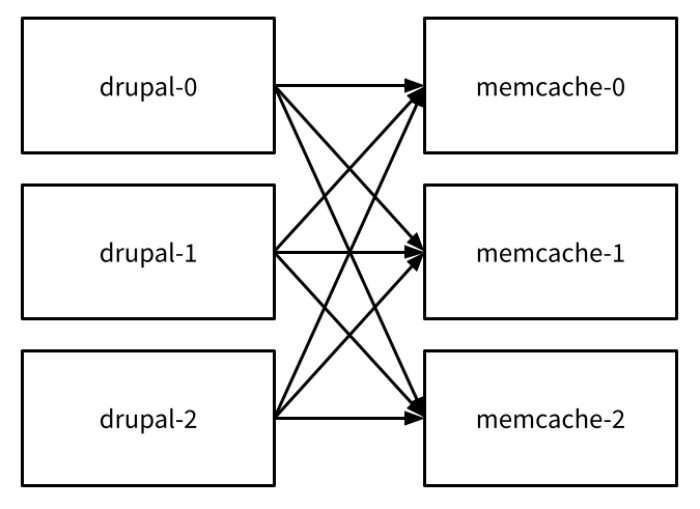

The connection count is m, the number of Memcache servers. When you add multiple Drupal web servers, on the other hand, the number of connections explodes:

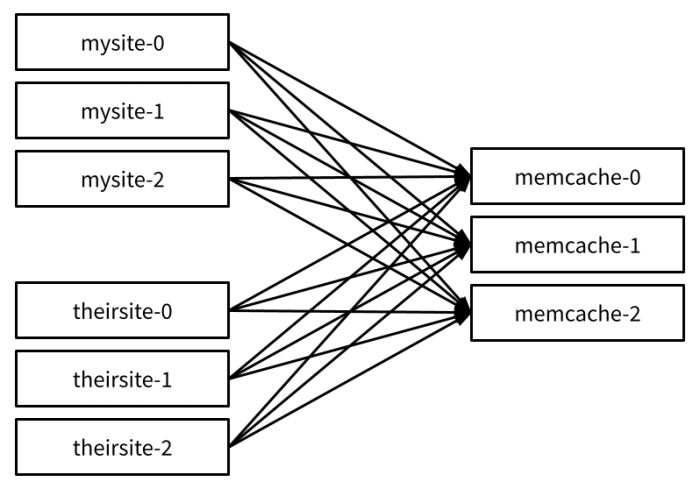

Now the connection count is d * m, or the number of Drupal web servers d times the number of Memcache instances m. That might seem okay if you’re only hosting one Drupal site on your infrastructure, but the situation becomes more complicated when you host multiple sites:

Now the connection count is the number of sites, times the number of web server replicas per site, times the number of Memcache instances. Every Memcache instance has to accept a connection from every web server replica of every site. That can quickly overwhelm its connection limit and cause the entire cluster of sites to go down or behave erratically.
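The Python calculation below puts rough numbers on this. The site, replica, and worker counts are hypothetical. `conns_per_replica` is an assumption: it stands in for PHP worker processes that may each hold their own Memcache connection. The 1024 figure is memcached’s default maximum number of simultaneous connections (its `-c` option), and your deployment may have changed it.

```python
MEMCACHED_DEFAULT_MAX_CONNS = 1024  # memcached's default for its -c option


def direct_connections(sites, replicas_per_site, memcache_instances, conns_per_replica=1):
    """Connections when every web server replica talks to every Memcache instance.

    Returns (total across the cluster, connections each Memcache instance accepts).
    conns_per_replica is an assumption, e.g. one connection per PHP worker process.
    """
    per_instance = sites * replicas_per_site * conns_per_replica
    return per_instance * memcache_instances, per_instance


# Hypothetical cluster: 40 sites, 3 web replicas each, 3 Memcache instances,
# and 10 PHP workers per replica that each hold a connection.
total, per_instance = direct_connections(40, 3, 3, conns_per_replica=10)
print(total, per_instance)  # 3600 1200
print(per_instance > MEMCACHED_DEFAULT_MAX_CONNS)  # True
```

With one site and one connection per web server, `direct_connections(1, d, m)` reduces to the d * m total described above.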

One way you could mitigate this is to set up multiple sets of Memcache servers, one per site:

This keeps connection counts at a more manageable level, but now you have many more servers to operate, which can be costly on traditional infrastructure or on IaaS such as Amazon EC2.

K8s and Overprovisioning

In a container-centric environment such as Kubernetes, this may be acceptable. While each container allocates memory, there’s an overall limit to the memory available in the cluster. The problem here becomes overprovisioning.

As you add more and more sites to the cluster, you allocate a new set of Memcache instances for each site to stay under the connection count limits. Each Memcache container takes up additional space to do the same job, and not all of the memory allocated to a site’s Memcache containers actually gets used by that site. The result is memory that is spoken for but sits idle. It’s as if I had a cooler full of water (the total cluster memory) and handed a full glass to each site (the memory allocated per site), only for each site to drink a third to a half of its glass. That leaves a lot of thirsty websites with nothing left to drink, even though plenty of water sits untouched in the glasses!

Multi-Tenancy and Proxying

To combat these issues, we often turn to two solutions: multi-tenancy and proxying.

It’s pretty easy to share a single Memcache server among multiple Drupal sites by setting a unique key prefix for each site. You can do this in settings.php:

```php
$settings['memcache']['key_prefix'] = 'mysite';
```

If all the sites share the same database server, each site’s database name makes a convenient unique prefix. Note that this isn’t the most secure setup, as a compromised site could simply lie about its prefix and nab another site’s cached items, but that’s out of scope for this post.
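Here is a minimal Python sketch of why a per-site prefix is enough to share one Memcache server. Each site’s keys get their own namespace, so identical cache IDs from different sites don’t collide. The dictionary stands in for the Memcache server. The `prefix:cid` key format, the class names, and the database names are hypothetical, because the Memcache module builds its own key format. The last lines show the security caveat: nothing stops a client from using another site’s prefix.

```python
class SharedCache:
    """Stand-in for a single Memcache server shared by several sites (hypothetical)."""

    def __init__(self):
        self._store = {}

    def set(self, key, value):
        self._store[key] = value

    def get(self, key):
        return self._store.get(key)


class SiteCacheClient:
    """Prepends a per-site prefix to every key (hypothetical key format)."""

    def __init__(self, server, key_prefix):
        self.server = server
        self.key_prefix = key_prefix

    def _key(self, cid):
        return f"{self.key_prefix}:{cid}"

    def set(self, cid, value):
        self.server.set(self._key(cid), value)

    def get(self, cid):
        return self.server.get(self._key(cid))


server = SharedCache()
# Each site's (hypothetical) database name serves as its prefix.
site_a = SiteCacheClient(server, "site_a_db")
site_b = SiteCacheClient(server, "site_b_db")

site_a.set("config:system.site", "Site A")
site_b.set("config:system.site", "Site B")
print(site_a.get("config:system.site"))  # Site A
print(site_b.get("config:system.site"))  # Site B

# The caveat: a client that claims another site's prefix can read its entries.
impostor = SiteCacheClient(server, "site_a_db")
print(impostor.get("config:system.site"))  # Site A
```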

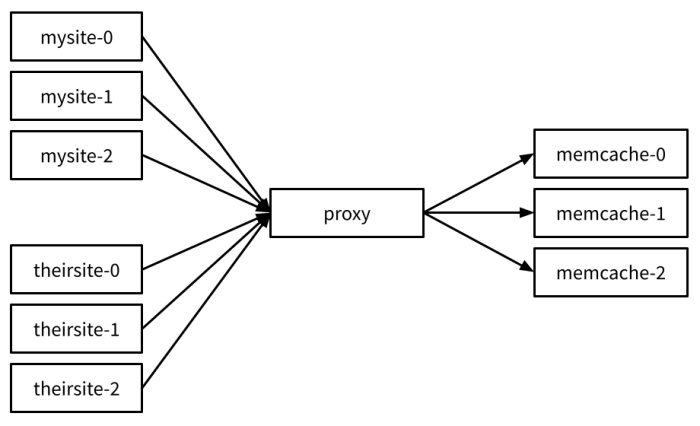

That gives us multi-tenancy, but what about proxying? Proxying limits the connection count by creating a single point through which all the connections pass.

There are several options for proxying Memcache. The most commonly used is still Mcrouter, although at TEN7 we settled on Twemproxy. While we use Memcache for most of our sites, there are some where Redis is the more appropriate or preferred choice. This gives Twemproxy an advantage over Mcrouter: it supports both Memcache and Redis in the same proxy software, and even in the same running instance!

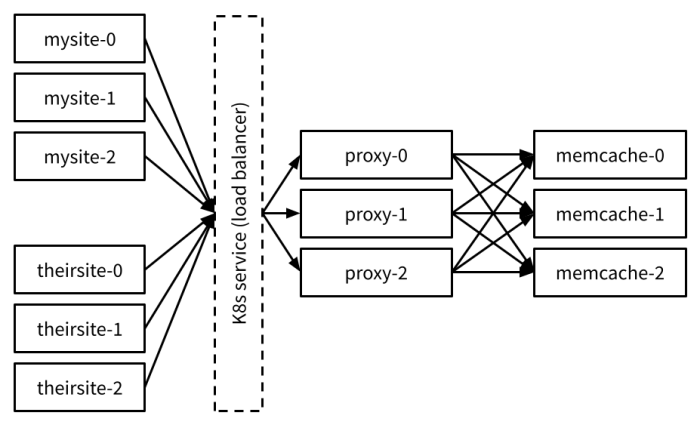

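To reduce connections further, we can also scale out the proxy and put a transparent network proxy, such as a load balancer, in front of it. Twemproxy uses a consistent distribution algorithm, so a given cached item always goes to the same underlying Memcache or Redis instance. This holds no matter which Twemproxy replica handles the request, as long as every replica is configured with the same server list. In Kubernetes, this load balancer would be a Service definition.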

Combined, these techniques reduce the connection count and add redundancy while cutting down on unused memory.
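The Python calculation below shows where the connections end up once web servers go through a pool of proxies. It assumes three things. First, the load balancer (for example, a Kubernetes Service) spreads client connections roughly evenly across the proxies. Second, each proxy opens `server_connections` connections to every Memcache instance. That parameter mirrors the Twemproxy pool setting of the same name, which defaults to 1, so check your own configuration. Third, all the counts in the example are hypothetical.

```python
import math


def proxied_connections(sites, replicas_per_site, proxies, memcache_instances,
                        conns_per_replica=1, server_connections=1):
    """Connection counts when web servers reach Memcache through a proxy pool.

    Assumes client connections are spread roughly evenly over the proxies and
    each proxy opens `server_connections` connections to every Memcache instance.
    """
    client_side = sites * replicas_per_site * conns_per_replica
    return {
        "client_side_total": client_side,
        "per_proxy_approx": math.ceil(client_side / proxies),
        "per_memcache_instance": proxies * server_connections,
        "backend_total": proxies * server_connections * memcache_instances,
    }


# Hypothetical cluster: 40 sites, 3 web replicas each, 10 PHP workers per replica,
# 3 Twemproxy replicas in front of 3 Memcache instances.
print(proxied_connections(40, 3, proxies=3, memcache_instances=3, conns_per_replica=10))
# {'client_side_total': 1200, 'per_proxy_approx': 400, 'per_memcache_instance': 3, 'backend_total': 9}
```

The client connections don’t disappear; the proxy tier absorbs them. This is one reason to run several proxy replicas behind the load balancer, while each Memcache instance sees only a handful of long-lived connections.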

But How Do You Test It?

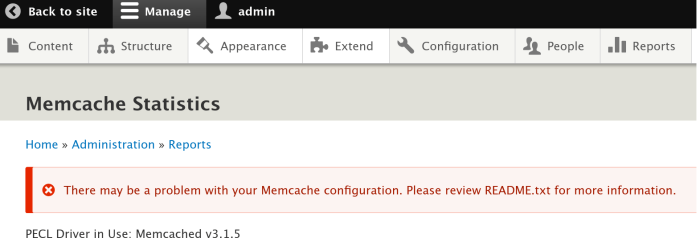

While Twemproxy is great, it does have some limitations. As stated above, Memcache is usually checked on a Drupal site using the Memcache Statistics report from the Memcache Admin submodule. If we do the same thing with Twemproxy, however, we’re greeted with a most unfortunate screen.

Looking at this, you might think, “Obviously, this isn’t working,” and spend a lot of additional time troubleshooting why. A more direct way to see whether Twemproxy is working is to set a cache value and retrieve it manually.

There are a number of ways to do this, but the easiest is to use the telnet command. Telnet is a remote terminal application that is built into many operating systems, so you may already have it on your computer. We can use telnet because Memcache supports a plain-text protocol, and Twemproxy implements (most of) that protocol.

```
telnet twemproxy-0 22122
Connected to localhost.
Escape character is '^]'.
```

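Once we’re connected, we can set a key-value pair. After the key come the flags (0), the expiration time in seconds (100), and the length of the value in bytes (7). The value itself goes on the next line: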

```
set my_test_key 0 100 7
testing
STORED
```

The STORED response indicates that the key was saved. Now we can retrieve it:

```
get my_test_key
VALUE my_test_key 0 7
testing
END
```

We got the value back! That means Twemproxy appears to be working. There are several more commands we could try, but what we’d really like is to get statistics:

```
stats items
Connection closed by foreign host.
```

Gah! What happened!? As soon as we ask for stats, Twemproxy bails out and closes the connection. If we reconnect, however, we’ll find our test key is still there (provided we do so before the entry’s 100-second TTL expires). So we know that Twemproxy is still running and hasn’t crashed.

It turns out that Twemproxy does not support all of Memcache’s commands, most notably the stats command. This actually makes sense. Twemproxy would either need to aggregate the stats over all the Memcache instances behind it, or return stats from a different instance each time. Neither is useful, so Twemproxy doesn’t support the command. This explains why the Memcache Statistics report doesn’t work.
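You can also script the set-and-get check instead of typing it into telnet. Below is a minimal Python sketch of the text protocol. It sends the same `set` and `get` commands as the session above: the key, value, flags, and 100-second TTL mirror it. It deliberately avoids `stats`, which Twemproxy rejects. `build_set`, `parse_get`, `read_until`, and `check_roundtrip` are hypothetical helpers, not part of any library.

```python
import socket


def build_set(key, value, exptime=100, flags=0):
    """Build a text-protocol 'set <key> <flags> <exptime> <bytes>' command plus its data."""
    header = f"set {key} {flags} {exptime} {len(value)}\r\n".encode("ascii")
    return header + value + b"\r\n"


def parse_get(response):
    """Parse a 'get' response into {key: value}; a bare END means a cache miss."""
    values = {}
    pos = 0
    while True:
        line_end = response.index(b"\r\n", pos)
        line = response[pos:line_end]
        if line == b"END":
            return values
        if not line.startswith(b"VALUE "):
            raise ValueError(f"unexpected response line: {line!r}")
        # VALUE <key> <flags> <bytes>, followed by the data block on the next line
        _, key, _flags, length = line.split()[:4]
        start = line_end + 2
        values[key.decode("ascii")] = response[start:start + int(length)]
        pos = start + int(length) + 2  # skip the data and its trailing \r\n


def read_until(sock, terminator):
    """Read from the socket until the buffer ends with the terminator."""
    data = b""
    while not data.endswith(terminator):
        chunk = sock.recv(4096)
        if not chunk:
            raise ConnectionError("connection closed by remote host")
        data += chunk
    return data


def check_roundtrip(host, port, key="my_test_key", value=b"testing"):
    """Set a key through the server or proxy, read it back, and compare."""
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(build_set(key, value))
        if read_until(sock, b"\r\n") != b"STORED\r\n":
            return False
        sock.sendall(f"get {key}\r\n".encode("ascii"))
        # Good enough for simple test values that don't themselves end in "END".
        reply = read_until(sock, b"END\r\n")
        return parse_get(reply).get(key) == value
```

For example, `check_roundtrip("twemproxy-0", 22122)` should return `True` when the proxy is passing commands through to a Memcache instance.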

Getting Stats The Hard Way

So how do we get stats out of the Memcache instances? It turns out we can do the same thing as above, but connect directly to a particular Memcache instance instead:

```
telnet memcache-0 11211
Connected to localhost.
Escape character is '^]'.
stats items
STAT items:2:number 12
STAT items:2:number_hot 0
STAT items:2:number_warm 4
STAT items:2:number_cold 8
STAT items:2:age_hot 0
STAT items:2:age_warm 1966
STAT items:2:age 1992
STAT items:2:mem_requested 1416
STAT items:2:evicted 0
STAT items:2:evicted_nonzero 0
…
```

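The output above was clipped, since it’s a very long list! We can even list the keys stored in a particular slab class using the stats cachedump command. Here we ask for up to 100 items from slab class 2. Each line shows the key, its size in bytes, and its expiration time: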

```
stats cachedump 2 100
ITEM memcache_bin_timestamps%3A-discovery [13 b; 0 s]
ITEM memcache_bin_timestamps%3A-config [14 b; 0 s]
ITEM memcache_bin_timestamps%3A-data [14 b; 0 s]
ITEM memcache_bin_timestamps%3A-toolbar [14 b; 0 s]
ITEM memcache_bin_timestamps%3A-rest [14 b; 0 s]
ITEM memcache_bin_timestamps%3A-migrate [14 b; 0 s]
ITEM memcache_bin_timestamps%3A-library [14 b; 0 s]
ITEM memcache_bin_timestamps%3A-file_mdm [14 b; 0 s]
END
```
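With several Memcache instances, reading each one’s output by eye gets tedious. A small parser can turn the captured text into data and add it up across instances, which is roughly the aggregation Twemproxy declines to do itself. The Python sketch below assumes you have captured the `stats items` and `stats cachedump` text from each instance, for example with a telnet session like the ones above. The function names are hypothetical. Decoding the keys assumes the `%3A` sequences are URL encoding (`%3A` is `:`).

```python
import re
from collections import defaultdict
from urllib.parse import unquote

ITEM_RE = re.compile(r"^ITEM (\S+) \[(\d+) b; (\d+) s\]$")


def parse_stats_items(text):
    """Parse 'STAT items:<slab>:<field> <value>' lines into {slab: {field: value}}."""
    slabs = defaultdict(dict)
    for line in text.splitlines():
        parts = line.split()
        if len(parts) != 3 or parts[0] != "STAT" or not parts[1].startswith("items:"):
            continue  # skip END, elided lines, and anything unexpected
        _, slab, field = parts[1].split(":", 2)
        value = parts[2]
        slabs[int(slab)][field] = int(value) if value.isdigit() else value
    return dict(slabs)


def total_items(outputs):
    """Sum the item counts across all slab classes and all instances."""
    return sum(
        fields.get("number", 0)
        for text in outputs
        for fields in parse_stats_items(text).values()
    )


def parse_cachedump(text):
    """Return (decoded_key, size_in_bytes, expiry) tuples from 'stats cachedump' output."""
    items = []
    for line in text.splitlines():
        match = ITEM_RE.match(line.strip())
        if match:
            key, size, expiry = match.groups()
            items.append((unquote(key), int(size), int(expiry)))
    return items


stats_sample = """STAT items:2:number 12
STAT items:2:number_hot 0
STAT items:2:evicted 0
END"""
dump_sample = """ITEM memcache_bin_timestamps%3A-config [14 b; 0 s]
END"""

print(parse_stats_items(stats_sample))
# {2: {'number': 12, 'number_hot': 0, 'evicted': 0}}
print(parse_cachedump(dump_sample))
# [('memcache_bin_timestamps:-config', 14, 0)]
print(total_items([stats_sample, stats_sample]))  # 24
```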

Summary

Key-value stores like Memcache are a great way to enhance Drupal’s performance. Scaling the functionality out, however, requires proxying to reduce network connections, multiple instances to improve redundancy, and multi-tenancy to make more effective use of available memory.

Once this is implemented, it’s easy to get frustrated and think the caching setup is broken because the conventional tools no longer work. With a little effort, however, we can confirm that caching is working using freely available tools.